Getting Started with DeepRacer on AWS (Intro to Machine Learning on Cloud)

Ensono

What is AWS DeepRacer?

AWS DeepRacer is a concept project for a self-driving car at 1/18th the scale of a real vehicle. The original idea was to combine an RC car with AWS DeepLens into an integrated learning system (iLs) that lets users learn and explore the basics of reinforcement learning.

AWS DeepRacer is powered by Amazon SageMaker and AWS RoboMaker. Users can also integrate other services, such as Amazon Kinesis (among many others), for real-time analysis of video and data streams. In this blog post, I will walk you through how to set up and deploy your first AWS DeepRacer project.

AWS DeepRacer offers a great opportunity to learn the basic principles of machine learning using Amazon SageMaker, and to see how robotics-related software applications are created in a cloud environment using AWS RoboMaker.

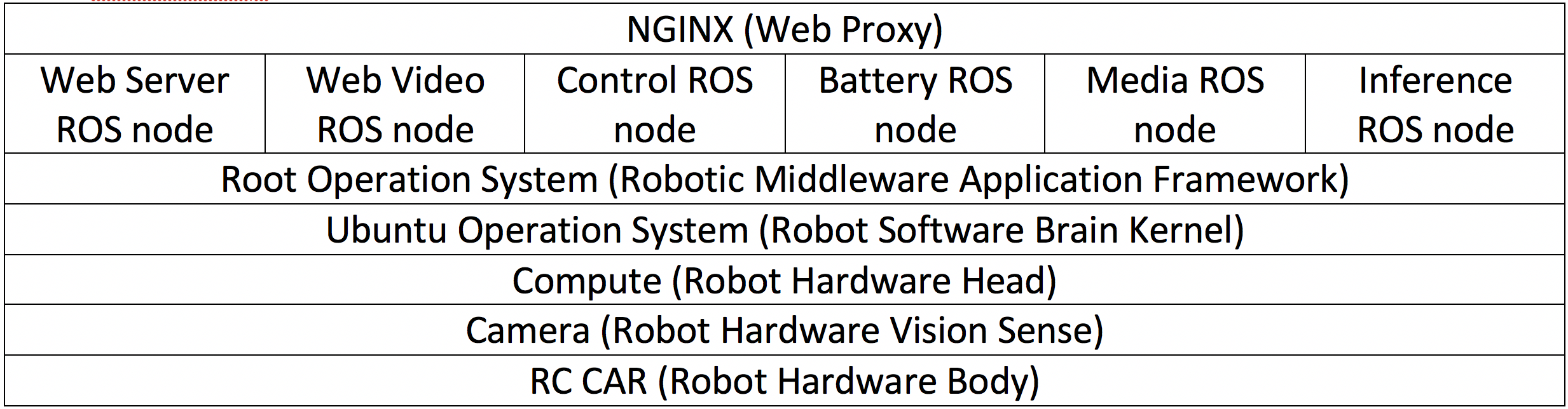

DeepRacer consists of the following three major components:

AWS DeepRacer Console

This is the GUI you will use to interact with the AWS DeepRacer platform on AWS.

AWS DeepRacer Vehicle

The small-scale RC vehicle is equipped with sensors such as a front-facing camera, stereo cameras, LIDAR, an accelerometer, and a gyroscope. These sensors gather data about the environment in which the vehicle operates.

AWS DeepRacer League

This is where users can compare their skills with other AWS DeepRacer developers in virtual or physical racing events.

The following AWS Cloud Services are required to use DeepRacer:

- Amazon S3 (model storage)

- AWS DeepRacer console GUI (reward function configuration)

- Amazon ECR image with Intel RL Coach (PPO algorithm, deep learning architecture)

- Amazon SageMaker (pipeline for training and building the model)

- AWS RoboMaker (ROS and Gazebo simulation)

Getting Started with DeepRacer

To get started with AWS DeepRacer, follow the steps below to train a reinforcement learning (RL) model for a vehicle equipped with particular sensors, and then evaluate the trained model to determine its initial performance.

If you have never used DeepRacer in your AWS account, you will first need to create account resources. This ensures that the account has all the permissions it needs for the AWS services used by DeepRacer.

- Sign in to your AWS account and go to AWS DeepRacer.

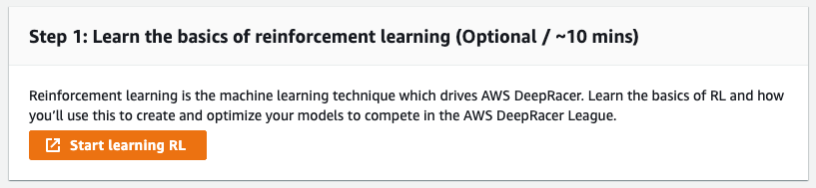

- To begin, choose “Get started” on the service landing page, or click “Get started with the reinforcement learning” on the main navigation page. (This step is optional, but highly recommended as basic RL training.)

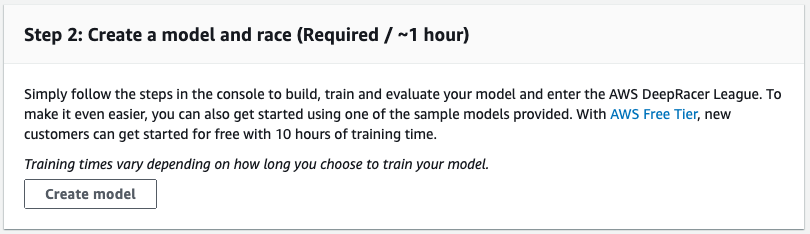

- After completing the basic RL training, navigate to the “Create a model and race” section and click “Create model”. Alternatively, choose “Your models” from the navigation pane on the AWS DeepRacer home page.

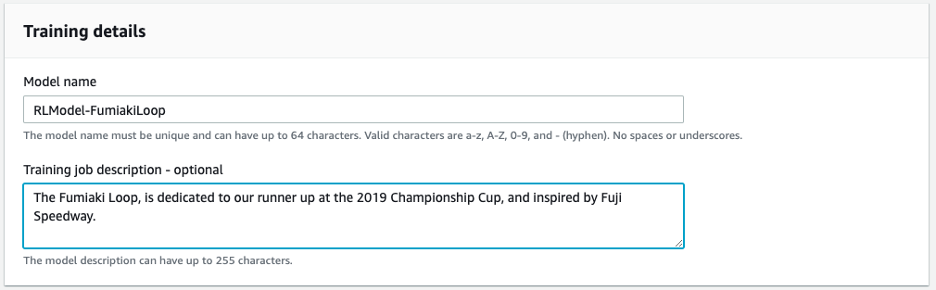

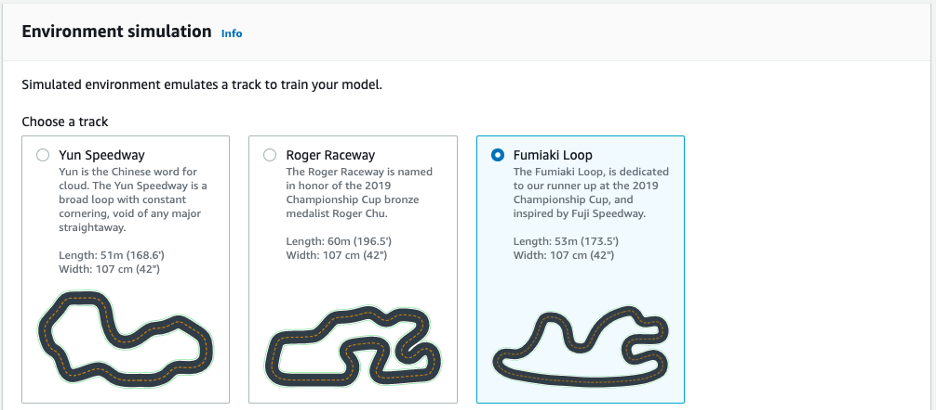

- In the “Training details” section, enter a model name. This name identifies the model when you submit it to the leaderboard of AWS-sponsored racing events or when you clone it. Then select the track on which you wish to begin training. For the first run, select a track with a simple outline and smooth turns. For later iterations, you can choose more complex tracks to gradually improve the model. To train a model for a specific racing event, always select the track that is most similar to the event track.

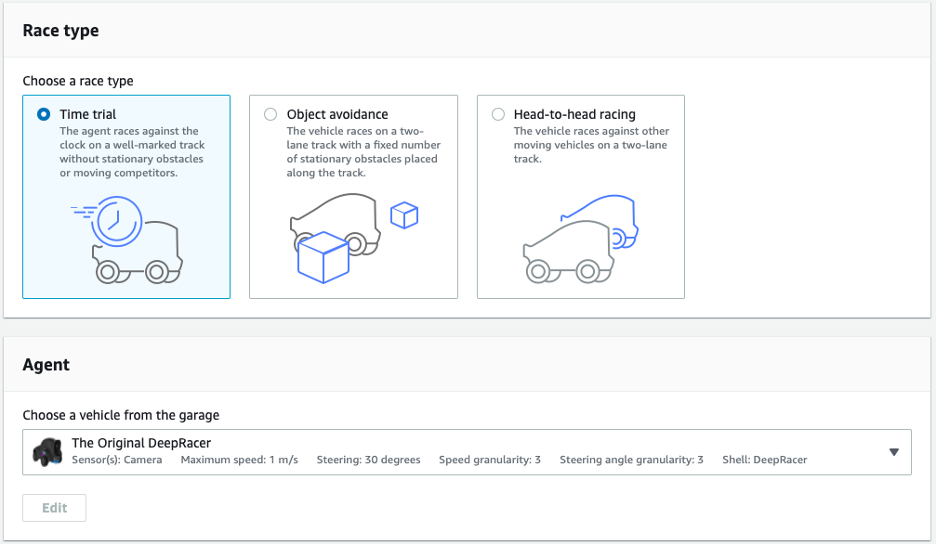

- Select “Next”, then choose the race type and the car to use from your garage. First-time users are best served by “Time trial”, which uses the default sensor configuration with a single camera.

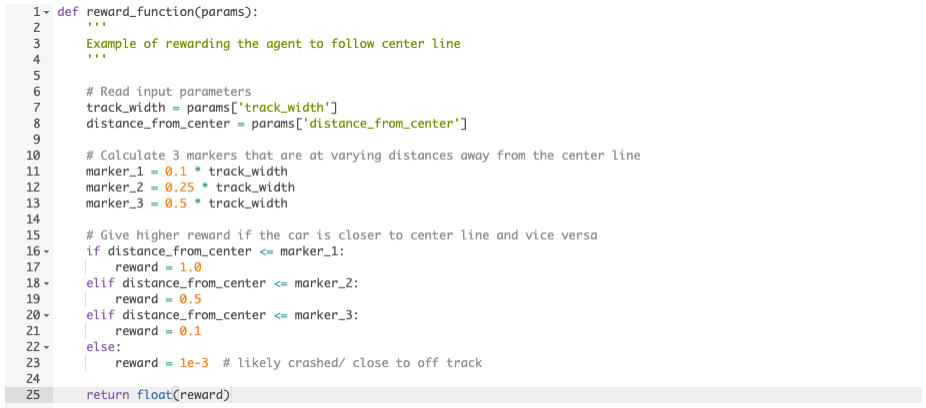

- Next, you need to create a reward function. The reward function provides the immediate feedback the vehicle receives when it moves from one point on the track to another. During training, the function checks the state of the vehicle and returns a reward that reflects model performance. Higher rewards indicate better model performance.

The following is an example of Python code that uses the distance of the agent from the center line of the track and gives higher rewards the closer the agent is to the center line.

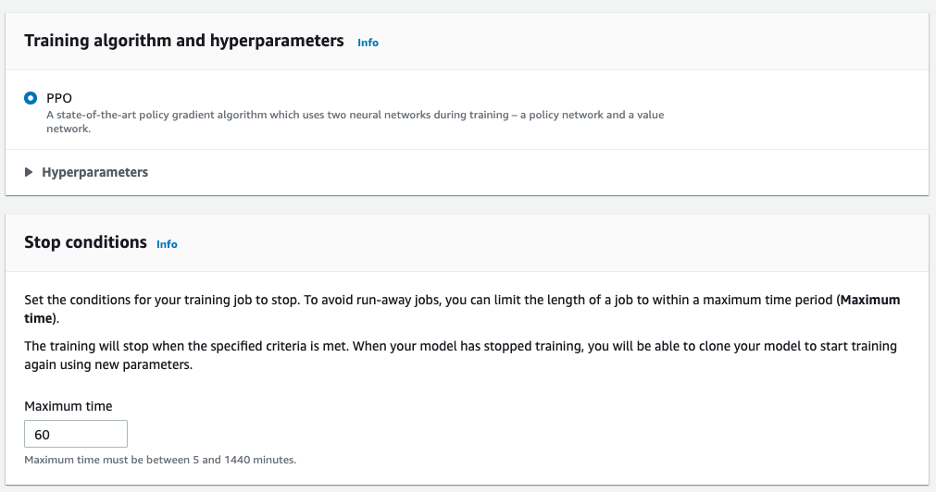
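For readers who cannot see the screenshot, here is a minimal text sketch of a center-line reward function in the same spirit. It is not a transcription of the image: the band widths (10%, 25%, and 50% of the track width) and the reward values are illustrative assumptions. It relies only on the `track_width` and `distance_from_center` inputs that DeepRacer passes to the reward function.

```python
def reward_function(params):
    """Reward the agent more the closer it stays to the center line."""
    # Inputs supplied by the simulator on each step
    track_width = params["track_width"]
    distance_from_center = params["distance_from_center"]

    # Three bands around the center line (illustrative thresholds)
    marker_1 = 0.1 * track_width
    marker_2 = 0.25 * track_width
    marker_3 = 0.5 * track_width

    if distance_from_center <= marker_1:
        reward = 1.0   # very close to the center line
    elif distance_from_center <= marker_2:
        reward = 0.5   # drifting away from the center
    elif distance_from_center <= marker_3:
        reward = 0.1   # near the edge of the track
    else:
        reward = 1e-3  # likely off the track

    return float(reward)
```

Calling `reward_function({"track_width": 1.0, "distance_from_center": 0.05})` returns the highest reward, because the agent is within the innermost band.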

- Next, we need to choose the reinforcement learning algorithm. AWS uses the Proximal Policy Optimization (PPO) algorithm for the DeepRacer environment (for more information on PPO, see https://openai.com/blog/openai-baselines-ppo/). When choosing the deep learning framework, it’s recommended to use a popular framework such as TensorFlow.

DeepRacer uses PPO largely because plain policy gradient methods can have convergence problems. The natural policy gradient addresses these problems, and PPO aims for similar stability with a simpler update rule. Even so, there are some things to be aware of:

- Training is extremely sensitive to hyperparameter tuning

- Outlier data tends to ruin training
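To make the idea behind PPO a little more concrete, here is a minimal sketch of its clipped surrogate objective in plain Python. This is only an illustration of the formula from the PPO paper, not the training code DeepRacer runs. The function name and the default `epsilon=0.2` are assumptions chosen for the example. Each ratio is the probability of an action under the new policy divided by its probability under the old policy.

```python
def ppo_clipped_objective(ratios, advantages, epsilon=0.2):
    """Mean clipped surrogate objective over a batch of samples."""
    terms = []
    for ratio, advantage in zip(ratios, advantages):
        # Keep the probability ratio within [1 - epsilon, 1 + epsilon]
        clipped_ratio = max(1.0 - epsilon, min(1.0 + epsilon, ratio))
        # Take the more pessimistic of the unclipped and clipped terms
        terms.append(min(ratio * advantage, clipped_ratio * advantage))
    return sum(terms) / len(terms)
```

The clipping limits how far a single update can move the policy, which is one reason a few extreme samples do less damage than they would with an unclipped policy gradient.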

- In the final step, AWS DeepRacer creates a new model with the parameters you configured.

Model training is where you measure the convergence behavior of the model. Training runs repeated trials on the selected track, with the agent moving around the track according to the actions chosen by the model being trained.
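One simple way to eyeball convergence is to smooth the per-episode rewards and check whether the curve flattens out. The sketch below assumes you already have a list of total rewards per episode. The `episode_rewards` values are made-up sample data, and `moving_average` is a hypothetical helper, not part of any DeepRacer API.

```python
def moving_average(values, window=3):
    """Return the trailing moving average for each full window of values."""
    if window <= 0:
        raise ValueError("window must be positive")
    averages = []
    for end in range(window, len(values) + 1):
        chunk = values[end - window:end]
        averages.append(sum(chunk) / window)
    return averages


# Made-up rewards that rise and then level off
episode_rewards = [10.0, 20.0, 30.0, 40.0, 40.0, 40.0]
smoothed = moving_average(episode_rewards, window=3)
```

If the smoothed values stop rising over many episodes, the model has probably converged for the current reward function and hyperparameters.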

For analytics and logging, DeepRacer sends events to CloudWatch Logs during training and evaluation.

Here is where you can find the different logs, by job type:

- For training job: under “/aws/sagemaker/TrainingJobs” log group.

- For simulation job: under “/aws/robomaker/SimulationJobs” log group.

- For evaluation job: under “/aws/deepracer/leaderboard/SimulationJobs” log group.

- For reward function execution: under “/aws/lambda/AWS-DeepRacer-Test-Reward-Function” log group.

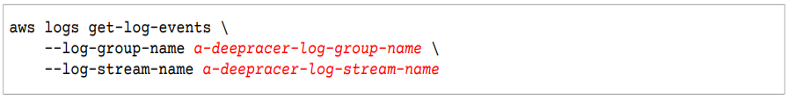

To access your logs using the AWS CLI

1) Open your Terminal window and type the following:

The output is in JSON format, so you can automate its processing using the AWS SDK.
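As a rough sketch of that kind of automation, the snippet below parses JSON log output with the Python standard library. The exact command is not shown in this post, so this assumes output shaped like that of `aws logs filter-log-events`: a top-level `events` list whose entries carry a `message` field. The `extract_messages` helper and the sample payload are made up for illustration.

```python
import json


def extract_messages(cli_output):
    """Return the message text of each log event in the JSON output."""
    payload = json.loads(cli_output)
    # "events" may be missing if no log events matched
    return [event["message"] for event in payload.get("events", [])]


# Made-up sample standing in for real CLI output
sample_output = json.dumps({
    "events": [
        {"logStreamName": "example-stream", "timestamp": 0, "message": "first line"},
        {"logStreamName": "example-stream", "timestamp": 1, "message": "second line"},
    ]
})

messages = extract_messages(sample_output)
```

From here you could filter the messages for the fields you care about, or write them to a file for later analysis.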

After training is complete, you can upload the model artifact to the DeepRacer vehicle and test it on your track.

Many factors can affect the performance of the car on the track. Improving performance takes several iterations of exploring and changing reward functions, hyperparameters, and more. If you want to test your model, try the AWS DeepRacer League and find a race near you.

Today, more and more companies are adopting machine learning and data science skills to generate valuable answers for their businesses from their data. If you are interested in building these types of skills, AWS DeepRacer is a great and fun way to learn the fundamentals of machine learning and AI.
